Recent modelling indicates that multiple-choice quizzes are “the most common and enduring form of educational assessment that remains in ...

You don’t need to look hard right now to see concerns about Aotearoa New Zealand’s education system.

A new partnership between the University of Auckland and 12 local schools is aiming to boost Māori and Pacific University Entrance pass rates and further study success.

Eating an unhealthy breakfast might be just as harmful to students’ educational outcomes as skipping the morning meal entirely, new research has found.

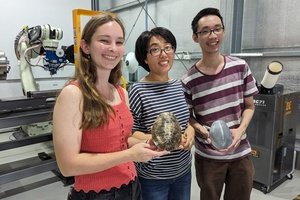

An innovative new program from Charles Darwin University, which seeks to encourage middle achievers to consider STEM-related courses, is aiming to benefit not only Year 11 and 12 students but also their slightly older postgrad university peers.

A $900,000 investment will see Victorian students learn about menstrual health and pelvic pain from next year, with free sessions set to be delivered across Years 5 to 10.

Almost 500,000 illegal vapes have been seized in the largest single operation of its kind in Australian history.

Thousands of teachers from more than 80 public schools across Western Australia stopped work on Tuesday morning, resulting in 22 schools closing fully and 62 remaining partially open.

Media-savvy ‘knowledge brokers’ from influential US think tanks are wielding incredible influence on education policy, despite having little expertise in the areas they speak on.

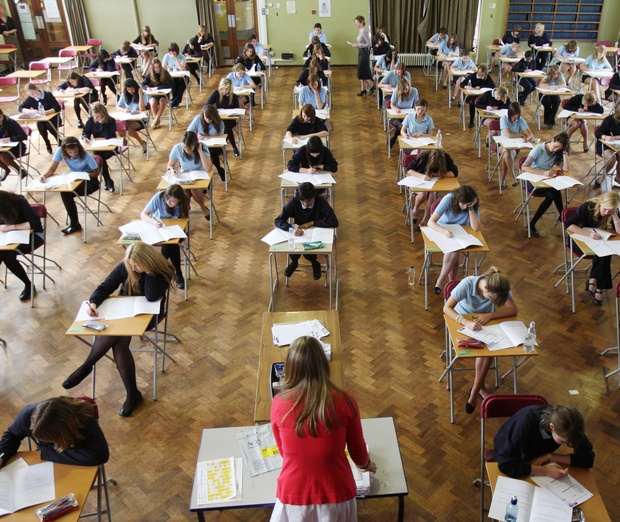

Assessment has always been a critical aspect of the teaching and learning cycle, with ideas around the frequency and best forms of assessment explored from a range of educational perspectives.

A string of academics have come out in force to warn against a push by the NSW Department of Education for explicit teaching across all schools.